Last week we invited you to lob your questions regarding autonomous cars at AUT professor Reinhard Klette, New Zealand’s foremost expert on the subject. Today he responds to a selection of the many questions he received, and explains why driverless cars might not be as close as you think.

Professor Reinhard Klette, former professor at the Academy of Sciences in Berlin, is New Zealand’s top mind when it comes to autonomous cars. Head of AUT’s Centre for Robotics and Vision (CeRV), Prof Klette has more than 450 publications across fields such as computational geometry, computer vision and computational photography.

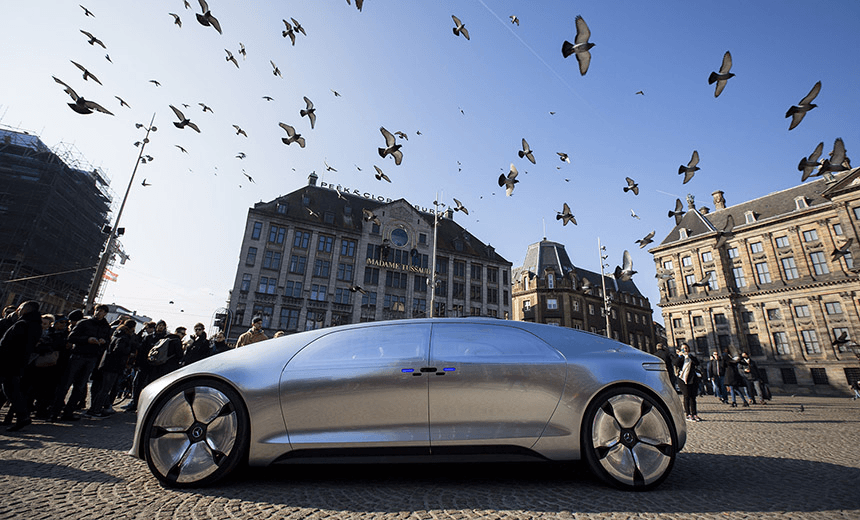

With international experience working with firms such as Mercedes, and more than 25 PhD students now graduated under his supervision, Prof Klette has seen autonomous vehicles go from a pipe dream to an inevitable reality. That makes him best placed to answer questions on everything from how they work to who dies, and who is responsible, when they crash.

The Spinoff: When it comes to getting these cars on the road, are we talking hardware problems or software problems?

Prof Klette: Originally I think it was a software problem, because it was necessary to show that the concept actually works. One important component is stereo vision. Around 2000 to 2005, stereo vision was at a level where we were still very skeptical and couldn’t see it working in a car. But around 2006 things changed in the field: new algorithms were developed, and we started to think ‘yes, it’s possible to have computer vision at 30 frames a second, and from that it’s possible to estimate the distance to other objects, in 360 degrees’.
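For readers wondering how two cameras give distance: in a rectified stereo pair, a point that shifts by a disparity of d pixels between the left and right images lies at depth Z = f·B/d, where f is the focal length in pixels and B is the distance between the two cameras. The snippet below is a minimal textbook sketch of that relation, not the method used by Prof Klette’s group or any carmaker; the focal length and baseline are made-up values chosen for illustration.

```python
def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Distance in metres for a rectified stereo pair, using Z = f * B / d."""
    if disparity_px <= 0:
        # Zero (or invalid) disparity: the point is effectively at infinity or unmatched.
        return float("inf")
    return focal_px * baseline_m / disparity_px


# Hypothetical camera rig: 1000 px focal length, cameras mounted 0.3 m apart.
FOCAL_PX = 1000.0
BASELINE_M = 0.3

for d in (60.0, 15.0, 3.0):
    z = depth_from_disparity(d, FOCAL_PX, BASELINE_M)
    print(f"disparity {d:5.1f} px -> distance {z:6.1f} m")
```

With these assumed values, disparities of 60, 15 and 3 pixels correspond to roughly 5 m, 20 m and 100 m. Because depth is inversely proportional to disparity, a one-pixel matching error matters far more for distant objects than for near ones.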

Then it became a hardware problem, because every camera has only a limited viewing angle. Fisheye cameras come with strongly distorted geometry, so you need many cameras pointing in different directions, or rotating cameras, or something like the Google Street View camera, with multiple cameras combined in one unit, which lets you generate panoramic views. But how do you hide that in a car? People don’t like to drive around with a little tower on top of their car. The cameras needed to be as small as the head of a pin.

People also said ‘you can never have computer vision in a car because it needs too much power. You cannot drive a PC around.’ At this point hardware developments took over, and in the end only around two watts were needed to run stereo vision in a car.

Are designers and manufacturers looking towards dual-functionality or removing the steering wheel altogether?

Long term we’re probably talking about the steering wheel and everything else being gone. In an airplane you switch to autopilot and the pilots can rest for long periods of time. But there are no obstacles in the air, so it’s much easier. On the road we have all these sudden events. I think for quite a while it will be important to have a driver, a person in charge, who can judge from the environment whether to keep an eye on things or take over the steering wheel.

Say you are driving on a highway for hours. It’s boring, just straight, the lanes are properly marked, and then suddenly there’s a construction zone, or something happens, like an accident, so the complexity changes. The driver, naturally concerned about his or her life, will say ‘OK, I’d better take over and take care of myself’. This scenario, with a driver in charge, will dominate for quite a while, even with high-end driver support.

In terms of a transition, would there be an issue with, say, 20% of the fleet being autonomous and 80% with humans behind the wheel making human mistakes?

Yes, there’s an issue. Just this weekend there was an accident in Arizona involving an autonomous Uber car, and the car at fault was one with a human driver. The Uber car was driving according to the rules, but the other car failed to give way to it, so Uber has now decided to stop all its autonomous cars while it works out whether the sensors can detect these crazy drivers. The problem was not the autonomous car itself, but an autonomous system might not be able to estimate well when a dangerous situation is developing and whether it should stop. The brake is always there. We don’t have to keep going; in many cases braking is the safest option.

So for some time, modern autonomous cars will certainly have to coexist with cars driven by people. Probably forever, because what about all these wonderful old-timers? Won’t they still go back on the road from time to time? People will want to show off their 100-year-old car. We already see it now, with cars from 1904 on Tamaki Drive, so this will happen. We have to coexist. But the autonomous cars also have to learn that there are still these horses running around.

How could you implement a system to ensure that the communications between cars were consistent?

Things like communication between cars, vehicle-to-vehicle communication and vehicle-to-infrastructure communication, are very quickly becoming an important topic, because they are already starting to be implemented. I’m actually surprised, because I thought there would be many safety concerns, hacking, things like that, but it’s already happening. The cars are starting to communicate, but I see big issues with the safety of this software.

And similarly so with the cars themselves, right? If a car can be hacked, maybe all of a sudden the brakes stop working, or the car drives itself into a wall or off a bridge.

Definitely. There are already well-known examples of people involved in developing modern cars saying ‘Oh, our cars can’t be hacked into, they’re safe,’ but you can see videos on YouTube where hackers have got inside the car’s systems, and then on the motorway the car slows down to 10 km/h, the windows go up and down, the indicators go on and off, the radio changes stations, the volume goes up, and the driver has no way to control the car anymore.

There are enough people in the world of technology who are keen to have new experiences, new adventures, so whenever you offer a new game someone will jump in. It will happen. The more of these new technologies we have, the more the whole world becomes a security risk. And it will probably create a nice new job opportunity: security experts for modern cars.

What about servicing these cars? Will a mechanic be able to service them? What about self-servicing?

Self-repairing robots we’ll leave to the future, but with new technologies you’ll probably have to take your car to a company specialising in that product. It’s already very difficult to take a software-supported car to a normal mechanic and say ‘check it out’; they need the proper analysis software for testing the car. On the other hand, with no combustion engines anymore, the workshop will also have to change: the ‘workshop of the future’.

When we have only electric motors and batteries, the complexity of the combustion engine is gone, and this will probably happen in new cars in the next twenty years or so. On the other hand, the complexity of the IT component in the car will increase. There will be new jobs and new opportunities. Mechanics will at least have to know how to run a diagnostic software package on the car, with a nicely designed interface that says ‘oh, the lane detection module is faulty in this car’.

What about governance and regulations around the software these cars are running? If you’ve got 20 manufacturers, they’re not all going to use the same software.

This is also a very important and interesting subject, and I have no answer for it. We already have partly autonomous cars driving around, Teslas and so on, and the law needs to deal with these cases. I think right now the solution is simple: everyone who buys a car behaves as the driver, and they are in charge. But in the future, if cars drive around with no human inside, which autonomous cars can of course do, then who’s legally responsible when something happens? The developer? The owner? The people providing the communication infrastructure?

This should have the highest security level. Why do we have autonomous driving? There are two main reasons. One is safety: reducing traffic accidents and their negative impacts. The other main reason is efficiency. Our roads are not coping with demand, and we have to find the traffic system of the future, where more people can travel safely in the available space. Autonomous vehicles can drive in platoons, as has been shown with trucks in Germany, keeping a constant distance of, say, one metre between them, so the road space is used more efficiently.

What about the Trolley Problem? You’re barreling down on a crowd, and you’re going to kill someone, and there’s an old lady, some teenagers, and a mother with a pram. Who gets to decide who lives and dies?

First, this will luckily happen very rarely. But you will have to make a decision in a fraction of a second. And then you can imagine someone needs to verify that the car is programmed in the right way. Maybe this creates another type of job, a ‘software safety verification agency’ in the government, deciding on new rules.

Right now the rules for drivers are easy. We believe every human has similar ethical concepts, and we ask only about the right of way. For driving exams in the future, we will have to ask of autonomous cars whether they are properly programmed, and also ask the ethical questions. Are the cars set for what a normal driver implicitly assumes? When we sit the driving test now, nobody asks you, ‘Do you value the life of a baby higher than that of a retired person?’ So are we already deciding? Is there already a legal concept for that?

You’d have to program the car with some sort of hierarchy right? Someone at some point would have to make a call on what we value more, and then rank them, so who would decide?

The first thing is to avoid any conflict. There’s a brake in the car, and whenever something looks like it is about to happen, stop the car. That’s the best decision you can make, and it’s a decision cars already make: when people use the brake they normally hesitate to push it hard, and that’s why modern cars have assisted braking. But if nothing else is possible, I think this is a question for philosophy. Philosophers can talk about it for ages, but it will not stop the process. Autonomous cars are coming. We cannot say ‘Oh, but there might be a school bus of kids on one side and some retired people on the other, and in the car are a few teenagers; who is more important?’ That will not stop the process.

So when are they coming?

Not for at least 30 years. I’ve seen some people say on TV ‘we’re doing it very quickly, in the next half a year, one year,’ but I think they are talking about environments where conditions are ideal: highways with proper lane markings and so on. Not about snowstorms, or rain at night, or other conditions that autonomous driving also has to handle. There are certainly limits to what we can do right now. For example, I was in one city in Mexico where all the inner-city traffic runs underground in tunnels. This creates a special environment, and when people talk about autonomous driving they might not have this in mind.

Being adaptive to different environments is one of the research topics. It’s like having a toolbox in your car: different methods for dealing with different situations, and according to the current situation you have to select the appropriate tool. The stereo vision program that works best in one scenario might not be the best one to use right now. The scenario can be defined by having objects very close to you, by it being night, by the wipers going; all of this can have an influence. By the way, wipers: why do we need wipers if the cars are autonomous? It is a very big social change.
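The ‘toolbox’ idea can be pictured as a set of rules that pick a method from the current conditions. The sketch below is a hypothetical illustration only: the `Conditions` fields, the method names and the 5 m threshold are invented here, and the interview does not say how such a choice is made in practice.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Conditions:
    """Hypothetical snapshot of the current driving scenario."""
    is_night: bool
    wipers_on: bool
    nearest_object_m: float


# Ordered "toolbox": the first rule whose condition holds picks the method.
# Method names and the 5 m threshold are invented for illustration.
TOOLBOX: List[Tuple[Callable[[Conditions], bool], str]] = [
    (lambda c: c.nearest_object_m < 5.0, "near_range_matcher"),
    (lambda c: c.wipers_on, "rain_robust_matcher"),
    (lambda c: c.is_night, "low_light_matcher"),
]
DEFAULT_METHOD = "default_matcher"


def select_stereo_method(c: Conditions) -> str:
    """Return the name of the first tool whose condition matches, else the default."""
    for applies, method in TOOLBOX:
        if applies(c):
            return method
    return DEFAULT_METHOD


if __name__ == "__main__":
    night_drive = Conditions(is_night=True, wipers_on=False, nearest_object_m=30.0)
    print(select_stereo_method(night_drive))  # low_light_matcher
```

Putting the rules in an ordered list makes the priority explicit (here a nearby obstacle outranks rain, which outranks darkness) and lets new tools be added without touching the selection function; that ordering is itself an assumption of this sketch.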

You’d also get to the point where, if the accident rate drops to almost no crashes or deaths, it would be pretty unethical to want to take your old Skyline out on the road.

Yes. For particular driving experiences we will probably need more and more areas dedicated to them. It’s like horse riding: there are special places where you can still ride a horse, but you cannot do it here in Aotea Square. We’ll need more race courses or driving tracks for taking your favourite old-timer out for a ride.

But looking further into the future, 50 or 100 years, what does it mean for autonomous driving? I imagine that public transport will again become much more important. Then it’s about comfort. People will want to travel in comfort, and they won’t want to sit in a little box without interaction. When you’re no longer needed as a driver, you can use the time to interact with other people, or to work or read, and you can do that much better in a larger public space like a train or a bus.

So it’s less likely there’ll be a million Google Earth cars and more likely we’ll have electric road trains?

Yes, like platoon driving, where one platoon looks like a road train. You merge into your platoon, perhaps driving yourself up until that moment, then you switch into autonomous mode and become part of the platoon. New technology offers a lot of options for new experiences. Windows won’t be needed for driving anymore. You could use the window as a screen, showing a virtual reality in which you’re driving through the Sahara.

Right now cars are designed around the driver, but if there’s no driver anymore you can have your seats arranged in a circle, or whatever you prefer. The basic concept of wheels, doors and a roof will remain; the rest is up to the imagination of the designers.

This content is brought to you by AUT. As a contemporary university we’re focused on providing exceptional learning experiences, developing impactful research and forging strong industry partnerships. Start your university journey with us today.