The only thing more volatile than the polling is the commentary around the volatile polling. Statistician Richard Arnold tackles some of the critical questions.

We love them, we hate them, and they have a greater impact on our political system than many would like to acknowledge. The problem is, polls are statistics, and people as a whole are generally pretty atrocious at making sense of statistics. Otherwise we wouldn’t ever play Lotto or assume that margarine destroys marriages based on how divorce rates track with margarine consumption.

Statistics is a big field of study. Statistically one of the biggest. There’s a lot to get through. With opinion polls all over the headlines, Dr Richard Arnold, associate professor of statistics at Victoria University of Wellington, fields some questions about how they can work, and how they can fail.

When a poll comes out that isn’t in favour of a particular party, their supporters will often try to pick holes in the polling methodology itself. Are those valid concerns?

There are a few ways a poll could be wrong.

The first could be that people lie. They don’t necessarily want to say they’re going to vote for something unpopular. People thought this might have been a real effect with Trump, although people responding to phone polls with a live interviewer answered much the same as those responding to automated voice-recognition polls over the phone. So that would suggest the “Shy Trump” effect wasn’t real.

The US presidential election had the problem of low voter turnout: only 56 percent. That means there’s around a one in two chance a person being polled isn’t actually going to vote. In New Zealand, our voting rates are higher, at 78 percent in 2014, and that means our polls aren’t as badly affected by that.

Another big problem for a poll can be that the people selected for the poll may not be typical. The problem is most phone polls are done by dialling landlines and many people are not reachable that way. So if the people who aren’t reachable by landlines vote significantly differently to the people who are then you end up with a biased result. In the past that meant an underrepresentation of poorer people who didn’t have a phone. Now it’s more likely to mean missing the views of younger people.

Is there any particular reason why polling companies don’t account for that?

They do some accounting for it by making sure they have proportionate numbers of males and females, and of people from each region and age group. The fact that you get fewer young people because they’re harder to reach by landline may mean you need to work harder to get those people, but you’re still not reaching all of them.

Some pollsters have argued they’ve tried using mobile phones as well and haven’t found much difference, but it’s not a good enough answer if there is a risk that people who aren’t reachable by landline are different. You can adjust all you like by age and sex, but it may be that, for example, left-leaning young people are less likely to be reachable by landline than right-leaning young people, which means that you still haven’t captured the heterogeneity of that group. Sample size is rarely the problem; the problem is that the sample can be a biased selection of the population. Polling companies can calculate the margins of error properly, but unfortunately they’re wrapped around a biased estimate.
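To make that argument concrete, here is a minimal sketch in Python of how weighting by age can leave the bias in place. Every number in it is hypothetical, invented purely for illustration: the age shares, the support levels and the landline reach rates are assumptions, not figures from any real poll. It works with expected proportions rather than a random sample, so the result is exact.

```python
# All figures are hypothetical and exist only to illustrate the argument.
# group: (share of population, support for party A,
#         landline reach if supporter, landline reach if non-supporter)
POPULATION = {
    "young": (0.30, 0.60, 0.20, 0.40),  # young supporters are harder to reach
    "old": (0.70, 0.40, 0.80, 0.80),    # older people are reached equally
}


def true_support(pop):
    """Support for party A across the whole population."""
    return sum(share * support for share, support, _, _ in pop.values())


def expected_sample(pop):
    """Expected (supporters, non-supporters) reached in each age group."""
    return {
        group: (share * support * reach_yes, share * (1 - support) * reach_no)
        for group, (share, support, reach_yes, reach_no) in pop.items()
    }


def raw_estimate(pop):
    """Unweighted share of supporters among everyone reached."""
    sample = expected_sample(pop)
    supporters = sum(yes for yes, _ in sample.values())
    total = sum(yes + no for yes, no in sample.values())
    return supporters / total


def age_weighted_estimate(pop):
    """Re-weight each age group back to its true population share."""
    sample = expected_sample(pop)
    return sum(pop[group][0] * yes / (yes + no) for group, (yes, no) in sample.items())


if __name__ == "__main__":
    print(f"true support:       {true_support(POPULATION):.3f}")
    print(f"raw poll estimate:  {raw_estimate(POPULATION):.3f}")
    print(f"age-weighted:       {age_weighted_estimate(POPULATION):.3f}")
```

Under these made-up numbers the age-weighted figure barely moves towards the true value, because the weighting fixes how many young people are counted but not which young people picked up the phone.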

Margin of error often comes up when people are looking at polls, either people saying it could be closer than it looks or joking that some parties are polling lower than the margin of error.

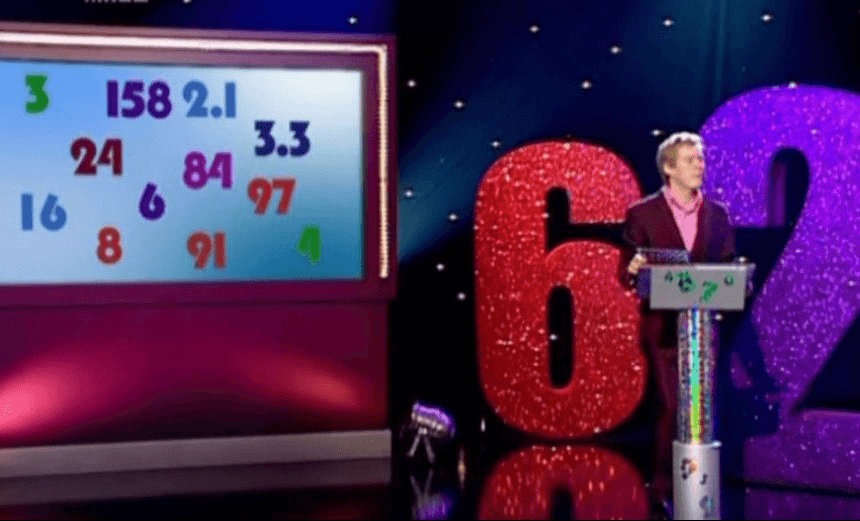

It’s a meaningless statement. The margin of error follows this equation.

MoE = The margin of error

p = Your chosen party’s result converted into a decimal

n = The sample size (most of the time it’s between 850 and 1000 people)

So if your party is polling around 50%, you’ll be getting a margin of error of around 3.2%. But if you’re polling at around one percent, then your margin of error is going to be about 0.6%.
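Those two figures can be checked with a few lines of Python. This is a sketch under stated assumptions: the equation appears here only as an image, so the code assumes the standard 95 percent formula for a proportion, MoE = 1.96 × sqrt(p(1 − p) / n), and a sample size of 950, which sits inside the range quoted above. The function name is illustrative.

```python
import math

Z_95 = 1.96  # assumed multiplier for a 95 percent confidence level


def margin_of_error(p, n, z=Z_95):
    """Margin of error for a party polling at proportion p with n respondents.

    Assumes the standard formula for a simple random sample:
    z * sqrt(p * (1 - p) / n).
    """
    if not 0 <= p <= 1:
        raise ValueError("p must be a proportion between 0 and 1")
    if n <= 0:
        raise ValueError("n must be positive")
    return z * math.sqrt(p * (1 - p) / n)


if __name__ == "__main__":
    n = 950  # assumed sample size
    for p in (0.50, 0.01):
        print(f"polling at {p:.0%}: margin of error {margin_of_error(p, n):.1%}")
```

The margin shrinks as a party’s result moves away from 50 percent, which is why the single headline margin of error can’t sensibly be compared with a small party’s result.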

What should polling companies be doing to make sure they’re getting accurate results from their calling?

They’ll use random digit-dialling, using the lists of residential phone numbers, to ensure every residence has a chance of being called. They’ll also randomise within the household, usually by asking for the person who has the next birthday coming up. That makes sure they don’t only hear from the chattiest people in the household – the ones who like to answer the phone before anyone else.

If they’re a reputable polling company, they’ll be re-ringing numbers they couldn’t get hold of. They’ll try calling a few more times at different times of the day. Disreputable companies will just move on to another phone number if they don’t get any answer the first time. Essentially, you want to turn non-contacts into contacts.

But what about mobile phones?

Using mobile phones for polling is a real problem. First of all, there’s no list of numbers. There’s no directory and so when you’re dealing with a seven-digit number you have over 100 million possible combinations. In a country of 4.5 million people, most of those numbers won’t have someone at the other end. Just think of the number of SIM cards being pumped out for groups like tourists; there are just too many of them and none are classified. I doubt if anyone does random-digit dialling for mobile phones.

Another solution used by some companies is to collect mobile phone numbers from the other face-to-face and landline surveys they run. This is problematic for several reasons. Firstly, those mobile phone numbers are only from people who have both had the time and inclination to respond to a previous survey and consented to be re-contacted on a cellphone. That’s not exactly a typical subset of the population. Customer databases from retailers and other companies are another possible source of phone lists, but we have to ask who such people really represent.

Another problem with using mobile phones is that many people have more than one phone, which for someone with two phones doubles their chance of being included. People also have business lines. Polling companies using landlines can exclude those, but that’s impossible for mobile phones.

In the future, landlines are likely to decline, which presents a big problem for polling. Some companies, such as Reid Research, have introduced an online component (25%) to complement landlines. But is there an ideal polling methodology?

The gold standard for performing a survey of the New Zealand population is to door-knock on permanent, private dwellings. This is what Statistics New Zealand does. The reasons for this are partly historical, going back to times when not everyone had landlines. Door-knocking is expensive and slow, especially if you have to send people back to places where no one was home. Our unemployment figures and our social and household figures are all collected in this manner. The fact that our official statistics agency still does this is an indication this is the only way to get numbers good enough for government.

And what about online polls like we see on news sites?

Terrible. Absolutely terrible. Here’s the acid test for whether a polling method is going to introduce bias. If the pattern of responses you see is in any way related to the method of selection, then there’s the problem of bias. The only reason you’d participate in an opt-in poll is because of the response you’re going to give – you most likely feel strongly one way or the other. There’s a 100 percent correlation between the response and the collection method.

Ideally with polls, you need to give everyone a chance of being selected. The chance doesn’t have to be equal, it just has to be known and can’t be zero.
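One way to see why a known, non-zero chance is enough is inverse-probability weighting: each respondent counts for one over their chance of selection. The sketch below is in Python and is only an illustration of that general technique, not a description of what any polling company does. The function name and the respondent data are hypothetical.

```python
def weighted_support(respondents):
    """Estimate support from (supports_party, selection_probability) pairs.

    Each respondent is weighted by 1 / selection_probability, so people who
    were less likely to be picked stand in for more of the population.
    """
    supporter_weight = 0.0
    total_weight = 0.0
    for supports_party, probability in respondents:
        if not 0 < probability <= 1:
            # A zero chance of selection cannot be corrected by any weight.
            raise ValueError("selection probability must be in (0, 1]")
        weight = 1.0 / probability
        total_weight += weight
        if supports_party:
            supporter_weight += weight
    return supporter_weight / total_weight


if __name__ == "__main__":
    # Hypothetical respondents: (supports the party?, chance of being selected)
    respondents = [(True, 0.5), (False, 0.25), (True, 0.25), (False, 0.5)]
    print(f"weighted support: {weighted_support(respondents):.2f}")
```

The check on the probability is the point of the exercise: unequal chances can be weighted away, but someone who could never have been selected has no weight that brings them back in.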

What does the future look like for polling?

It just may be that in the future we have less diversified electronic identities. Initiatives like RealMe may come along where the different ways of authenticating ourselves are all tied together, and that might make sampling the population a lot easier. This would be difficult for private polling companies to get access to, although it might also be the case we all surrender our privacy to the extent it’s no longer something we object to.

So, considering methodology and circumstances around polling, how much stock should we actually put into them?

In general, our polls haven’t been badly wrong, because we have high turnout and a methodology that’s reasonable. The companies that do New Zealand polls do relatively well at matching the truth. And in polling, as opposed to market research, you do actually get the election result to check against. So we may not need to be too worried about methodology now, but it’s likely to become an increasing problem as time goes on.

The Spinoff’s science content is made possible thanks to the support of The MacDiarmid Institute for Advanced Materials and Nanotechnology, a national institute devoted to scientific research.